Google Open Sources a MapReduce Framework to Run Native Code

Feb 2016

As per the announcement, Google has open sourced a MapReduce framework that lets users run their native C and C++ code on the Hadoop platform, whose own MapReduce implementation is written in Java.

Problems Faced by Hadoop:

1) Low Performance

2) Scalability Issues

To address these issues, Google released MR4C, short for MapReduce for C. It was originally developed by Skybox Imaging to process large-scale satellite imagery and geospatial data.

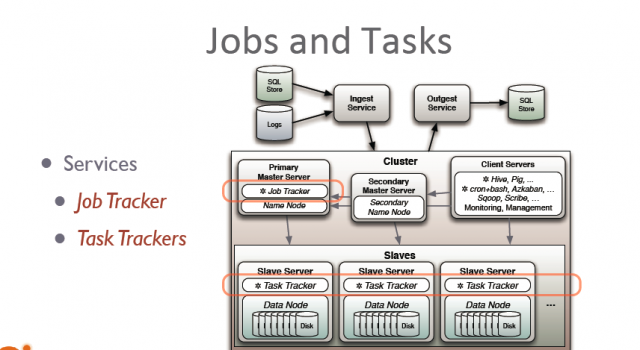

Hadoop's job tracking and cluster management capabilities made it a strong platform for handling data at scale, but the team also wanted to keep using the robust image processing libraries that are written in C and C++.

Although companies have built their own proprietary systems to achieve this, MR4C offers a broader solution that can save a good deal of time when working with large datasets.

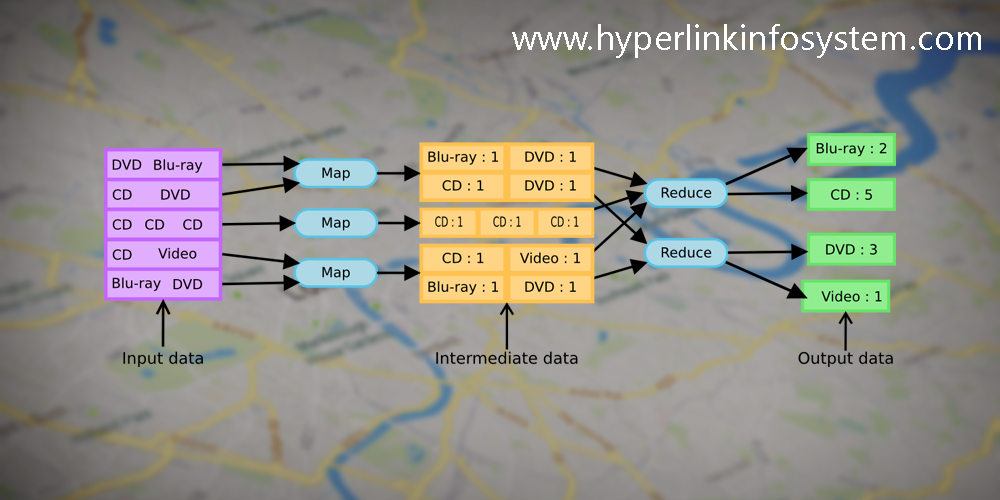

Understanding the Concept of MR4C Execution

Algorithms are stored inside native shared objects that access your data either from the local file system or from any Uniform Resource Identifier (URI).
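As a rough illustration of the local-versus-URI distinction, the Python sketch below classifies a dataset location. It is not MR4C code: the function name `classify_location` and the labels it returns are hypothetical and only show the idea.

```python
from urllib.parse import urlparse


def classify_location(location: str) -> str:
    """Return 'local' for plain paths and file:// URIs, else the URI scheme."""
    parsed = urlparse(location)
    # No scheme (e.g. "/data/in") or an explicit file:// URI: local file system.
    if parsed.scheme in ("", "file"):
        return "local"
    # Anything else (hdfs://, s3://, ...) is treated as a remote URI.
    return parsed.scheme


# Hypothetical example locations.
print(classify_location("/data/images/scene1.tif"))
print(classify_location("hdfs://namenode:8020/data/scene1.tif"))
```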

Input/output datasets, runtime arguments, and any external libraries are configured using JSON (JavaScript Object Notation) files.
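To make the idea of a JSON-driven configuration concrete, here is a minimal Python sketch that parses such a file and checks that the expected sections are present. The key names (`inputs`, `outputs`, `params`, `libraries`), the dataset names, and the helper `load_config` are assumptions made for illustration; they are not MR4C's actual schema.

```python
import json

# Hypothetical configuration; the key names are illustrative, not MR4C's schema.
CONFIG_TEXT = """
{
  "inputs":  {"imagesIn":  {"location": "hdfs://namenode:8020/data/in"}},
  "outputs": {"imagesOut": {"location": "/tmp/out"}},
  "params":  {"threshold": 0.5},
  "libraries": ["libimageproc.so"]
}
"""


def load_config(text: str) -> dict:
    """Parse the JSON text and check that the expected sections exist."""
    config = json.loads(text)
    for section in ("inputs", "outputs", "params", "libraries"):
        if section not in config:
            raise KeyError(f"missing config section: {section}")
    return config


config = load_config(CONFIG_TEXT)
print(sorted(config["inputs"]))
print(config["params"]["threshold"])
```

Keeping datasets, arguments, and libraries in one file like this means the native algorithm itself does not need to change when it moves between environments.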

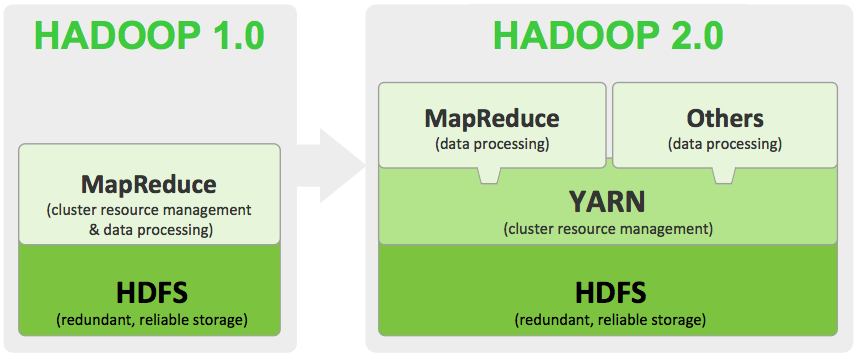

Splitting mappers and allocating resources can be configured using Hadoop YARN based tools, or at the cluster level for MRv1.
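The general idea of splitting work across mappers can be sketched in a few lines of Python. This is only a conceptual round-robin partition with a hypothetical helper `split_for_mappers`; it does not reflect how YARN or MR4C actually assigns splits.

```python
def split_for_mappers(items, num_mappers):
    """Distribute items across num_mappers groups in round-robin order."""
    if num_mappers < 1:
        raise ValueError("num_mappers must be at least 1")
    # Slice i takes every num_mappers-th item, starting at offset i.
    return [items[i::num_mappers] for i in range(num_mappers)]


# Hypothetical input files to be shared between two mappers.
files = ["a.tif", "b.tif", "c.tif", "d.tif", "e.tif"]
for mapper_id, chunk in enumerate(split_for_mappers(files, 2)):
    print(mapper_id, chunk)
```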

Workflows of multiple algorithms can be linked together using an automatically generated configuration.
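One way to picture an auto-generated workflow configuration is a function that wires each step's output to the next step's input. The sketch below is an assumption about the general pattern, not MR4C's generator; `chain_configs`, the `intermediate/` naming, and the step names are all hypothetical.

```python
def chain_configs(steps, first_input, final_output):
    """Build one config per step, feeding each step's output to the next."""
    configs = []
    current_input = first_input
    for index, name in enumerate(steps):
        is_last = index == len(steps) - 1
        # Intermediate results get a generated location; the last step
        # writes to the caller-supplied final output.
        output = final_output if is_last else f"intermediate/{name}"
        configs.append({"algorithm": name, "input": current_input, "output": output})
        current_input = output
    return configs


# Hypothetical two-step image workflow.
for step in chain_configs(["denoise", "classify"], "raw/", "results/"):
    print(step)
```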

Callbacks for logging and progress reporting can be viewed through the Hadoop JobTracker interface.

You can also build and test your workflow on a local machine using the same interface as on the target cluster.

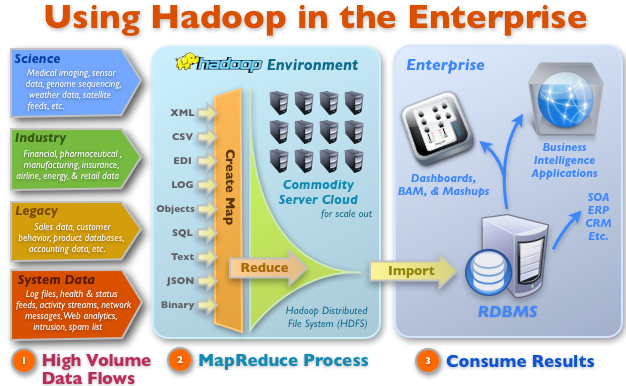

Using Hadoop MapReduce at Enterprise Level:

The figure below illustrates how MapReduce works at the enterprise level.

What is interesting is that MR4C is not the first time native C++ code has been chosen for use with Hadoop. The Quantcast File System (QFS), an alternative to HDFS developed by Quantcast, is also written in C++ for its performance benefits.

Facebook follows the same idea with its HipHop system, which translates PHP code into C++, again for the performance benefits.

Testing of MR4C

MR4C has been tested on:

Ubuntu 12.04 (Linux)

CentOS 6.5 (Linux)

Cloudera CDH Apache Hadoop distribution


A widely discussed point these days is that Apache Spark, a framework that is faster than MapReduce, is attracting a lot of attention but does not support C/C++ as native code. It does, however, support Python, Java, and Scala. It remains to be seen how much traction MapReduce for C (MR4C) gets, and with whom; it could turn out to be a pretty big deal for App Development India.

So now you are aware of most of what is being said about MR4C. Imagine that you develop a large number of apps on any platform, say Android, iOS, or Windows, and you link calls to the appropriate services. What happens when it comes to storing the huge datasets of your cross-platform application? Don't worry: Hyperlink Infosystem, one of the top app development companies, will take care of it on your behalf. Work with Hyperlink Infosystem to implement MR4C and Apache in your application. Contact us for a free quote to enhance your dataset storage.
